Should the Academic Community Trust Plagiarism Detectors?

Academic integrity is a serious issue in the research community. It ensures that scientists remain dedicated to producing original research and ideas that lead to scientific breakthroughs. Researchers therefore need to be diligent in keeping science honest and safeguarding it against misleading claims and academic dishonesty.

One way the scientific community can do this is by protecting science and its associated literature from plagiarism. There are many different types of plagiarism, each harmful in its own way. To protect against plagiarism, academic journals have started using plagiarism detectors that rely on algorithms. This has led to a debate about plagiarism, the use of plagiarism detectors, and the role of editors.

The Drawbacks of Plagiarism Detectors

Many researchers have shared their experiences of journal rejection caused by reliance on plagiarism detectors. Debora Weber-Wulff, a professor of media and computing at the HTW Berlin University of Applied Sciences, thinks plagiarism detectors are a crutch and a significant problem. Journals are relying too much on them. Furthermore, journals often rely on a single report generated by these plagiarism detectors. They rarely seek a second opinion.

Weber-Wulff says that the reports are often difficult to interpret and, at times, incorrect. The scores produced, referred to as “originality scores” or “non-unique content”, can be difficult to interpret without clear context. For example, they sometimes report false positives for common phrases, names of institutions, or references. In addition, the software cannot accurately detect plagiarism in cases of translations or information taken from multiple sources.

Another drawback is that plagiarism detectors simply point out text duplication. This means that they look for a string of three to five words that also appears in another manuscript. As a result, plagiarism detectors might fail to identify genuine plagiarism.
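To make the idea of text duplication concrete, here is a minimal sketch of word-sequence matching in Python. It is not the algorithm of any particular detector; the function names, the four-word window, and the example sentences are all assumptions chosen for illustration. It shows how standard consent language can produce a high overlap score even though nothing was plagiarized.

```python
import re


def word_ngrams(text, n=4):
    """Return the set of n-word sequences found in text (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_ngrams(submission, source, n=4):
    """Return the n-word sequences that appear in both texts."""
    return word_ngrams(submission, n) & word_ngrams(source, n)


def overlap_score(submission, source, n=4):
    """Fraction of the submission's n-word sequences also found in source."""
    sub = word_ngrams(submission, n)
    if not sub:
        return 0.0
    return len(sub & word_ngrams(source, n)) / len(sub)


# Hypothetical example sentences: boilerplate consent language from two
# unrelated manuscripts.
submission = "Written informed consent was obtained from all patients before enrollment."
source = "In our trial, written informed consent was obtained from all patients."

matches = shared_ngrams(submission, source)
score = overlap_score(submission, source)
print(f"{len(matches)} shared four-word sequences, overlap {score:.0%}")
```

A purely mechanical check like this cannot tell boilerplate from copied findings, which is why a flagged passage still needs a human reader to interpret it.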

The Need for Manual Detection

Bernd Pulverer, chief editor of The EMBO Journal, says that these systems “cannot capture the plagiarism of ideas, rehashing findings without attribution, or the use of figures or data without permission”. An editor, on the other hand, can catch these issues, because an editor reads the text and studies all parts of a manuscript for inconsistencies.

Jean-François Bonnefon, a behavioral scientist at the French Centre National de la Recherche Scientifique, shared his experience with a plagiarism detector. He explained that a manuscript he submitted was rejected by detection software. However, it was not the content of his ideas or his research that was flagged. Instead, the flagged content included the methods, references, and author affiliations. This is a serious error, but one that could have been avoided had an editor reviewed the manuscript. As Bonnefon said, “there is obviously no human in the loop here.” His statement is a testament to the limitations of plagiarism detectors. Taking the person (the editor) out of the process gives full autonomy to a system that relies on algorithms.

Travis Gerke, of the Moffitt Cancer Center in Florida, had a similar experience. The plagiarism report for a paper he submitted to one of Springer Nature’s journals primarily flagged the author list, citations, and standard language about patient consent. Gerke said it was “odd” that he had to explain how these sections of his paper were not plagiarized. Had an editor looked over the manuscript, he or she would have understood that language about patient consent is standard across the scientific community.

Different Plagiarism Detection Software

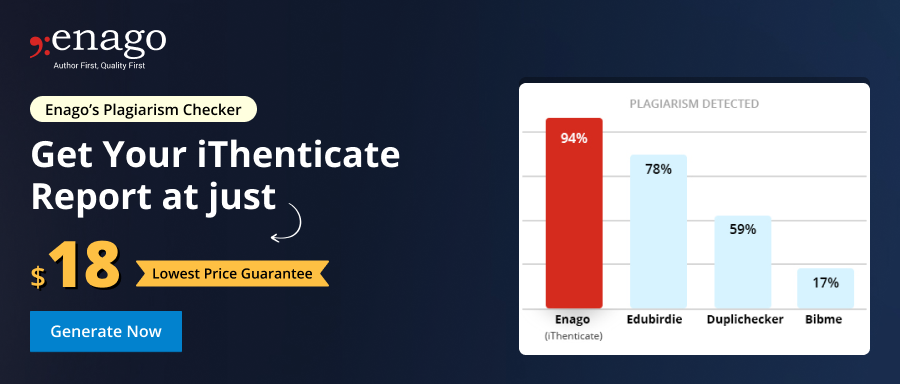

There are four main plagiarism detection tools used by academic journals. Each of these has its own unique features, but also its own limitations.

- Grammarly scans billions of web pages and draws from a large pool of academic resources.

- iThenticate uses an extensive database that includes close to 50 million works from 800 scholarly publishers.

- Plagscan uses a large database of academic and digital content.

- Crossref compares full-text manuscripts to an archive of nearly 40 million manuscripts and 20 million web sources.

The most prominent limitation of these systems is non-verbatim plagiarism. This involves different approaches to rewriting a text, including “text laundering”. In a study on the plagiarism software used by Publication Integrity and Ethics (PIE), a British research ethics organization, “text laundering” is described as a method used to “trick” the plagiarism software. It involves changing the order of words, removing filler words, or simply using synonyms in place of the original words. Since plagiarism detectors do not analyze the content of the work, they fail to detect plagiarized ideas or information.
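The effect of text laundering on a detector that only looks for verbatim word sequences can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the method used in the PIE study or by any real product: the filler-word list, the synonym table, the three-word window, and the example sentence are all hypothetical. Only filler removal and synonym substitution are shown; word reordering is left out.

```python
import re

# Hypothetical word lists, chosen only for this illustration.
FILLER_WORDS = {"very", "really", "simply", "quite"}
SYNONYMS = {"harmful": "damaging", "impedes": "hinders", "progress": "advancement"}


def tokenize(text):
    """Split text into lowercase words."""
    return re.findall(r"[a-z']+", text.lower())


def ngrams(words, n=3):
    """Return the set of n-word sequences in a list of words."""
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def launder(text):
    """Drop filler words, then swap the remaining words for listed synonyms."""
    kept = [w for w in tokenize(text) if w not in FILLER_WORDS]
    return [SYNONYMS.get(w, w) for w in kept]


def verbatim_overlap(candidate_words, source_words, n=3):
    """Fraction of the candidate's n-word sequences found verbatim in source."""
    cand = ngrams(candidate_words, n)
    if not cand:
        return 0.0
    return len(cand & ngrams(source_words, n)) / len(cand)


original = "Plagiarism is really harmful because it simply impedes scientific progress."
original_words = tokenize(original)
laundered_words = launder(original)

print("laundered:", " ".join(laundered_words))
print("overlap with original:", verbatim_overlap(laundered_words, original_words))
```

In this toy case the laundered sentence carries the same idea as the original yet shares no three-word sequence with it, so a check based only on verbatim matches reports no overlap.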

Fixing a Broken System

Plagiarism is serious and harmful. It distorts the work of scientists and researchers and impedes scientific progress. In addition, it could lead to a lack of interest in scientific fields. However, the academic community is trying to fix these issues.

Springer Nature has begun to incorporate mandatory editor reviews for submissions to its journals. Susie Winter, the director of communications at Springer Nature, recently explained the process to The Scientist. “Papers are first reviewed by a person, then checked using technology, and then checked by a person again…All decisions at Springer Nature are editorially led.” The inclusion of an editor to oversee the review of submissions should be mandatory. First, an editor could help interpret the reports generated by the plagiarism detectors. Second, an editor might find and correct the errors in these reports.

Many publishers, including Elsevier and Springer Nature, have started programs that test complex artificial intelligence tools used to support the peer review process. These programs focus on the AI tools’ ability to identify statistical issues or to summarize a paper by identifying its main statements. “These will turn out to be useful editorial tools,” Bernd Pulverer says. However, like most who share his sentiments, he does not feel that they, or any plagiarism checker, will be as effective as an editor’s eye and expertise.

What are your experiences with plagiarism in academia? Have you dealt with an issue concerning plagiarism detectors and academic journals? Please share your thoughts with us in the comments section below.
