50% More Papers, Less Science? What Does This Mean For You as a Researcher?

In academic publishing, productivity has always been easy to measure. Count the papers, track the citations, and the numbers appear to tell a story of progress. But the rise of generative AI (GenAI) is forcing the research community to ask a more uncomfortable question: What if more papers do not necessarily mean better science?

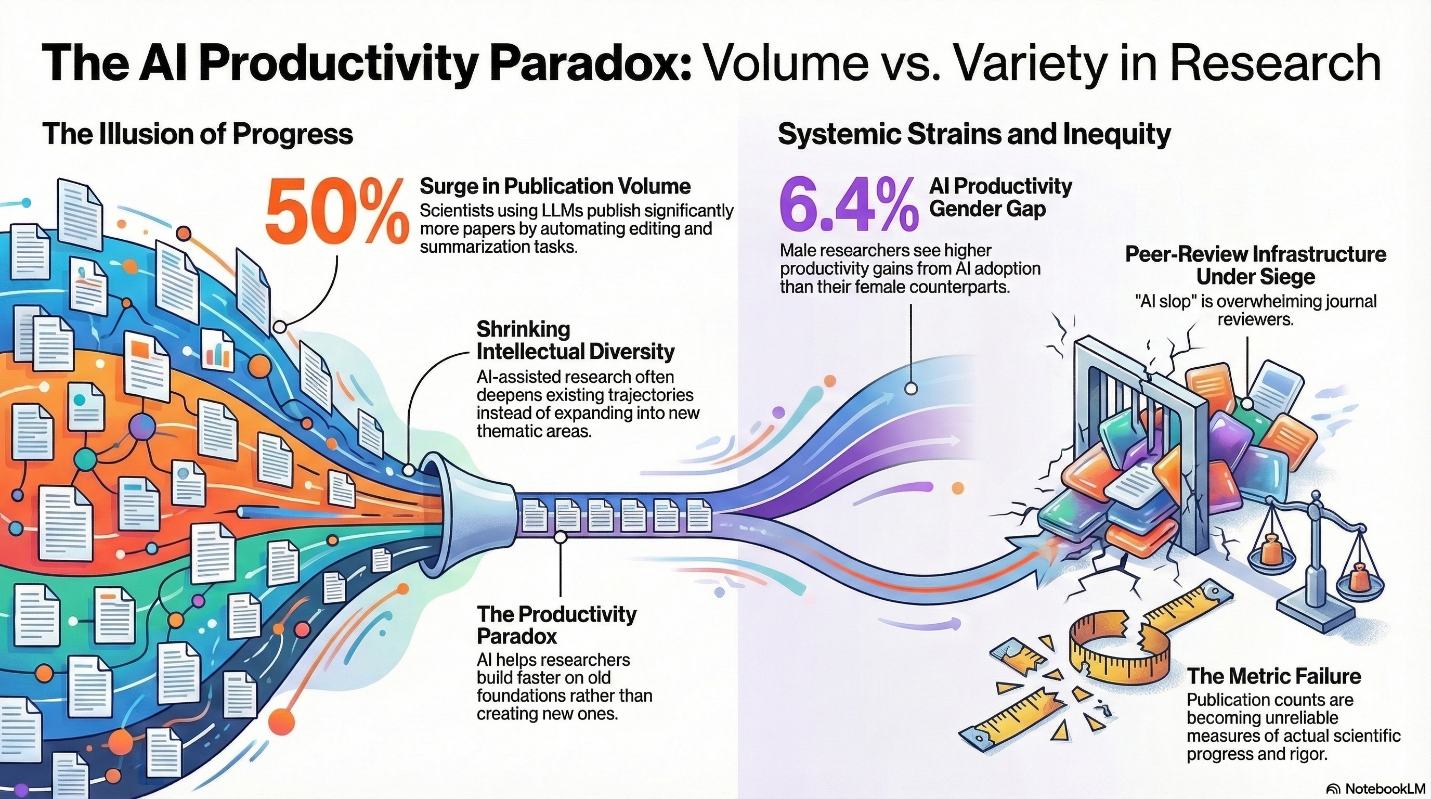

Evidence suggests that researchers using AI tools are publishing faster and in greater numbers than before. Yet alongside this surge in output, new concerns are emerging about narrowing research agendas, uneven benefits across researchers, and a growing strain on peer-review systems.

Measured Productivity Gains but Reduced Thematic Diversity

One of the clearest signals of AI’s impact on research productivity comes from a Cornell University study, which found that scientists using large language models (LLMs) such as ChatGPT published up to 50% more papers than before.

From one perspective, this is a positive development. AI tools can streamline time-intensive tasks like editing manuscripts, identifying relevant citations, or summarizing prior literature. However, increased output does not necessarily translate to deeper or more meaningful scientific progress.

Large-scale analyses of scientific literature reveal a striking pattern. Researchers who adopt AI tools tend to publish more papers and receive more citations, but their work increasingly concentrates within narrower thematic areas. Instead of expanding the intellectual frontier, AI-assisted research often deepens existing trajectories.

Unequal Gains in the AI Era

Another dimension of the productivity paradox concerns who benefits from AI-driven productivity.

A study published in PNAS Nexus found that the productivity boost associated with AI adoption is 6.4% higher for male researchers than for female researchers. This suggests that systemic disparities, such as differences in access to resources, training, or institutional support, may shape how effectively researchers can leverage AI tools.

Without careful attention, AI could amplify existing inequities in academia, benefiting groups already advantaged in research ecosystems. But unequal access to productivity gains is only part of the picture. As AI-assisted research output grows, concerns are also emerging about the quality and rigor of that expanding volume of work.

The Rise of “Productivity Without Rigor”

Editors and conference organizers are beginning to confront a more practical consequence of AI-driven productivity. Reports from within the publishing industry describe a noticeable rise in low-quality or partially AI-generated submissions (sometimes referred to informally as “AI slop”). These manuscripts are often linguistically polished but lack methodological rigor or conceptual novelty, placing additional strain on peer-review systems already operating at capacity.

As the volume of submissions rises, reviewers must spend more time filtering out weak or poorly verified work, potentially slowing the evaluation of genuinely novel research.

The result is a growing tension: AI improves writing fluency and productivity, but polished language can obscure weak scientific contributions, making it harder for editors and reviewers to distinguish meaningful research from superficial output.

Rethinking What Productivity Means in Research

The emerging evidence points to a paradox at the heart of AI-enabled research:

- Researchers can produce more papers faster

- Yet the collective intellectual diversity of science may shrink

- And the systems that evaluate research quality may become overwhelmed

None of this suggests that AI is inherently harmful to research. On the contrary, AI tools can democratize access to scientific communication, reduce language barriers, and accelerate routine tasks that previously consumed researchers’ time.

However, the evidence strongly suggests that publication counts alone are becoming an increasingly unreliable measure of scientific productivity.

If success continues to be measured primarily by publication counts, AI may simply reinforce academia’s entrenched bias toward volume: producing more papers to meet institutional expectations. In such a system, AI becomes a powerful amplifier of the “publish-or-perish” culture already shaping research careers.

What Should Researchers Do?

Responsible AI use in science therefore requires a fundamental shift in how research contributions are valued. The question is not how many additional papers AI can help you produce, but whether it enables you to:

- Ask meaningful scientific questions

- Add novel insights and expand knowledge

- Explore diverse and emerging research areas

- Strengthen methodological rigor and transparency

- Enhance equity in global research participation

The real risk is not that AI will change how research is produced, because it already has. The risk is that without clear standards, it will quietly redefine what counts as good science. Yet there is a gap in how researchers perceive these standards.

This gap is precisely what Enago’s Responsible Use of AI (RUAI) initiative seeks to address. By collating clear guidelines, standardizing disclosure practices, and encouraging stronger human oversight, it brings much-needed structure to an ecosystem currently marked by inconsistency and uncertainty. It recognizes that responsible AI use cannot be left to individual judgment alone; rather, it requires coordinated action across researchers, publishers, and institutions.

As AI continues to accelerate research output, the question is no longer whether we can produce more, but whether we can do so without compromising integrity, diversity, and trust. Responsible use of AI offers a path forward, not just to manage AI adoption, but to shape it deliberately, so that scientific progress is not only faster, but fundamentally better. Let us know if you need assistance in understanding publisher and university policies or in applying best practices for the responsible use of generative AI through the RUAI initiative.
