By: Enago

AI-Assisted Writing Makes Papers Sound Better, But Does It Compromise Scientific Rigor?

Artificial intelligence (AI) has become a “behind-the-scenes” collaborator in scholarly writing. This growing reliance is highlighted in Elsevier’s recent global survey of 3,000 researchers on how AI tools are now embedded across research workflows. Researchers reported using AI for literature reviews (51%), summarization (61%), data analysis and interpretation (38%), and drafting reports and manuscripts (38%). These figures indicate that AI is no longer peripheral; it is influencing how research findings are structured, interpreted, and presented.

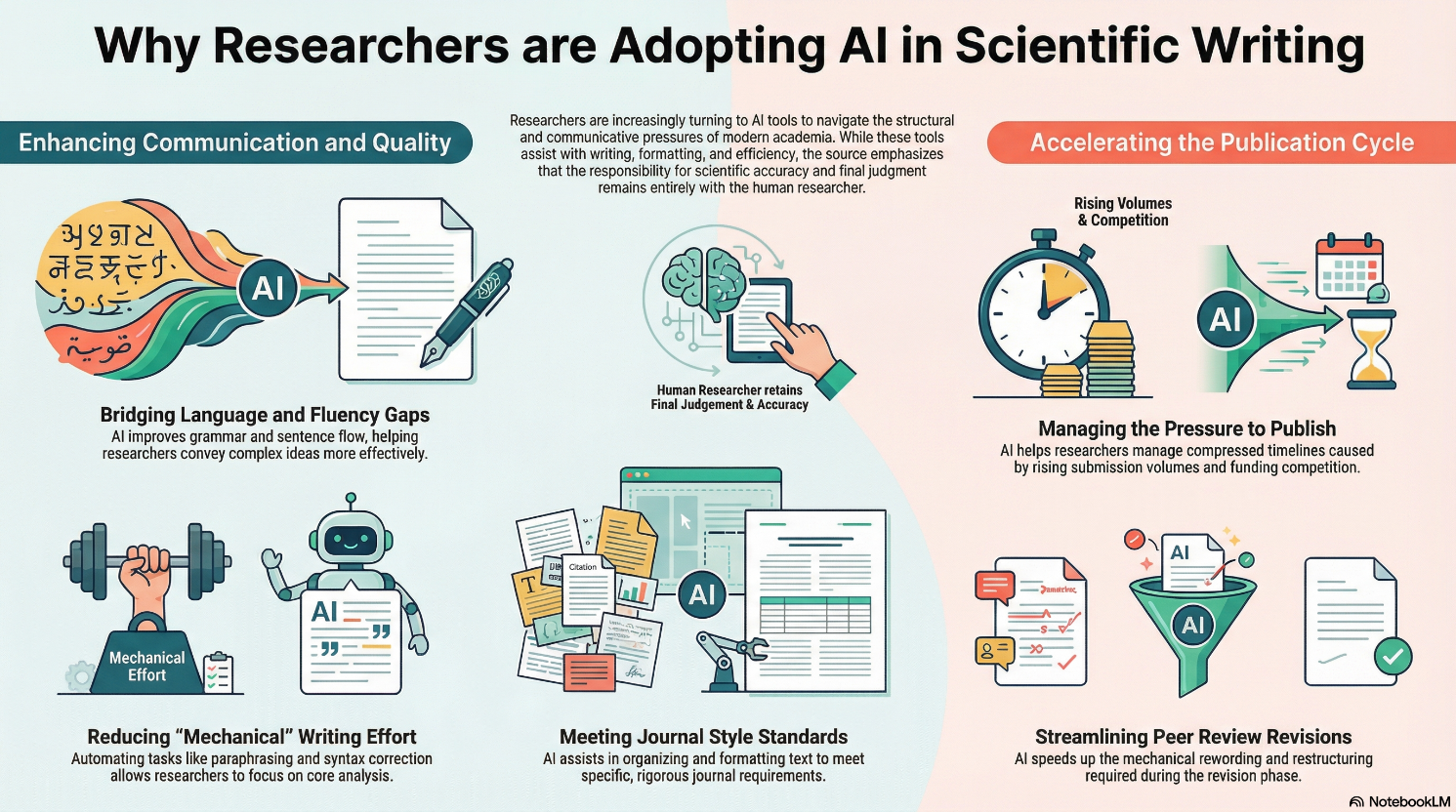

Why are Researchers Using AI Tools for Writing?

AI adoption in research writing is no longer marginal. A 2025 analysis of AI use in academic writing revealed that among researchers who reported using AI tools for manuscript preparation and language editing, 51% used them to improve readability, while 22% relied on them to check grammar. Furthermore, a study published in Nature Human Behaviour that analyzed more than 1.1 million preprints and published papers across platforms such as arXiv, bioRxiv, and Nature journals found that the presence of large language model (LLM)-modified content has steadily increased. By late 2024, evidence of LLM-modified content had reached approximately 22% in some fields (e.g., computer science). These findings show that AI-assisted manuscript preparation is becoming increasingly common across research disciplines.

While AI can offer preliminary support for improving surface-level fluency and formatting, a quieter risk emerges: language that appears clearer, stronger, and more persuasive may no longer reflect the underlying science with the same fidelity.

Surface-Level Fluency Risking Foundational Integrity

When AI tools and/or LLMs are used for writing and language refinement without expert human review, they introduce a cluster of risks to responsible AI use. These include:

1. Loss of author voice

AI-assisted rewriting can subtly shift emphasis, restructure argument flow, or fix wordiness in ways that may reframe the author’s analytical intent. It may also introduce stylistic patterns—such as increased use of hedging and boosting verbs, frame markers, and attitude markers—that reshape how claims and interpretations are presented. In some cases, this can lead to an overemphasis on findings and conclusions while softening expressions of uncertainty, methodological limitations, or disciplinary nuance, thereby altering the positioning and interpretation of the research.

2. Amplification of biases

AI models trained on large datasets may reproduce systemic cultural, gender, or disciplinary biases. A study at Harvard Medical School found that AI models analyzing pathology samples misclassified cases across certain racial, gender, and age groups, reflecting patterns in the training data rather than the sample characteristics.

3. Hallucinated or factually inaccurate data

AI tools can produce outputs that sound plausible but are factually incorrect or appear logically coherent even when evidentiary gaps or conceptual inconsistencies remain. A recent case documented by Retraction Watch revealed numerous fabricated AI-generated references in an article in the Journal of Academic Ethics. The incident highlights how over-reliance on AI without human verification can introduce errors that compromise the scholarly record.

Beyond concerns about factual accuracy, a case in Intensive Care Medicine illustrates how using AI to enhance structural coherence can introduce such inaccuracies. The letter, later reported by Retraction Watch, appeared fluent and logically organized. However, it contained multiple non-existent references, including one supposedly from the same journal, which ultimately led to its retraction. This incident highlights how the use of GenAI for formatting and language improvement can weaken the underlying evidence.

4. Overstated claims

LLMs prioritize fluency and coherence over nuance, often strengthening assertions in statements that were originally cautious or uncertain. A study from Utrecht University found that leading chatbots routinely exaggerated scientific claims, potentially overstating results and masking uncertainty. Similarly, a study reported by the University of California, Irvine on human perception of AI outputs reinforces this risk, showing that such responses can appear more convincing than warranted.

5. Altered methodologies

AI-assisted rewriting of technical sections can unintentionally alter methodological descriptions. A real‑world example is the retraction of a study published in Scientific Reports on explainable AI for autism diagnosis. A post‑publication investigation revealed multiple issues including inadequate methodological description, an AI‑generated nonsensical figure, undisclosed code, and unverified datasets, all of which affected confidence in the research’s integrity and conclusions.

Such cases threaten key research principles (accuracy, accountability, and author responsibility) and demonstrate that fluently written AI outputs can mislead readers if not carefully reviewed.

Transparency, Editorial Judgment, and Disclosure

Well-polished AI-generated text can often create an illusion of completeness, potentially giving readers the impression that the findings are stronger or more conclusive than the underlying data indicate. In other words, language quality can unintentionally influence scientific judgment. This risk is illustrated by a 2025 retraction in Brain Research, where an article containing substantial scientific inaccuracies was retracted despite AI-assisted language improvements and editorial approval.

The risk increases when AI use is not transparently disclosed. Without disclosure, reviewers and readers cannot distinguish between the authors’ analytical decisions and AI-assisted phrasing. This raises concerns about:

- Transparency: It becomes difficult to know which parts of the manuscript reflect the authors’ judgments versus AI-generated phrasing.

- Robustness: Polished language may mask uncertainties, methodological limitations, or gaps in the data, threatening reproducibility.

- Accountability: Without explicit disclosure of AI involvement, responsibility for errors or overstatements becomes unclear.

These concerns make governance and disclosure policies central to responsible AI use in scholarly publishing.

While formal policies are increasingly in place, enforcement and interpretation remain uneven across journals and disciplines. This variation places greater responsibility on researchers to ensure transparent disclosure and careful human validation of AI-assisted content.

Responsible AI use in research publishing requires transparent disclosure of AI-assisted contributions, clear editorial guidance on evaluating AI-assisted content, consistent policy application, and sustained human oversight throughout the publication process. Tools like the Enago AI Disclosure Generator can help authors prepare structured, transparent disclosure statements that support accountability in AI-assisted work.

As AI becomes more integrated into research workflows, the scholarly community must adopt clear principles for the responsible use of AI in scholarly publishing. Strengthening transparency, consistency, and human oversight will help ensure that AI supports, rather than compromises, the credibility and integrity of scientific research.

Frequently Asked Questions

Can I use AI to write my research paper?

Yes. You can use AI to draft or edit parts of your research paper.

However, you are completely responsible for checking accuracy, references, and claims and must disclose AI use transparently.

What are the risks of over-relying on AI for research writing?

Overreliance on AI can introduce serious errors.

It may generate fake references, overstate findings, or alter methodological details. Therefore, all outputs must be reviewed and validated by the author before submission.

How should I disclose AI use in my research paper?

Mention the AI tool’s name, its version (if applicable), and how it was used (e.g., language editing or summarization).

Check your target journal’s policy to confirm where the statement should appear (it is usually in the Methods or Acknowledgments section). Tools such as the Enago AI Disclosure Generator can help create a complete and transparent declaration.

Does AI editing improve the quality of the research in a paper?

No. The quality of the research depends on study design, methodology, data accuracy, and valid conclusions. AI improves language clarity, not rigor.

It can fix grammar and structure, but it cannot verify data accuracy, methodology, or interpretation.

Do journals allow AI tools like ChatGPT for manuscript writing?

Yes, with conditions. Many journals allow limited AI use for editing or drafting. However, AI cannot be listed as an author, and authors must disclose how the tool was used.