Wiley Did a Study on GenAI Use. 3 Things Stood Out.

Generative AI is no longer experimental in research. It is already embedded in everyday workflows. However, widespread adoption is only a part of the story. What matters more is how researchers are recalibrating expectations, confronting risks, and redefining what responsible AI use requires in practice.

The latest ExplanAItions report from Wiley, based on insights from more than 2,400 researchers globally, captures this shift in operational use and expectations. The findings show a research community that is not only adopting AI rapidly but is also becoming more aware of its limitations and of the governance and oversight mechanisms required to use it responsibly.

Three insights stand out. And together, they reveal a shift that goes far beyond adoption.

1. AI Adoption has Increased, But the Expectations are Being Recalibrated

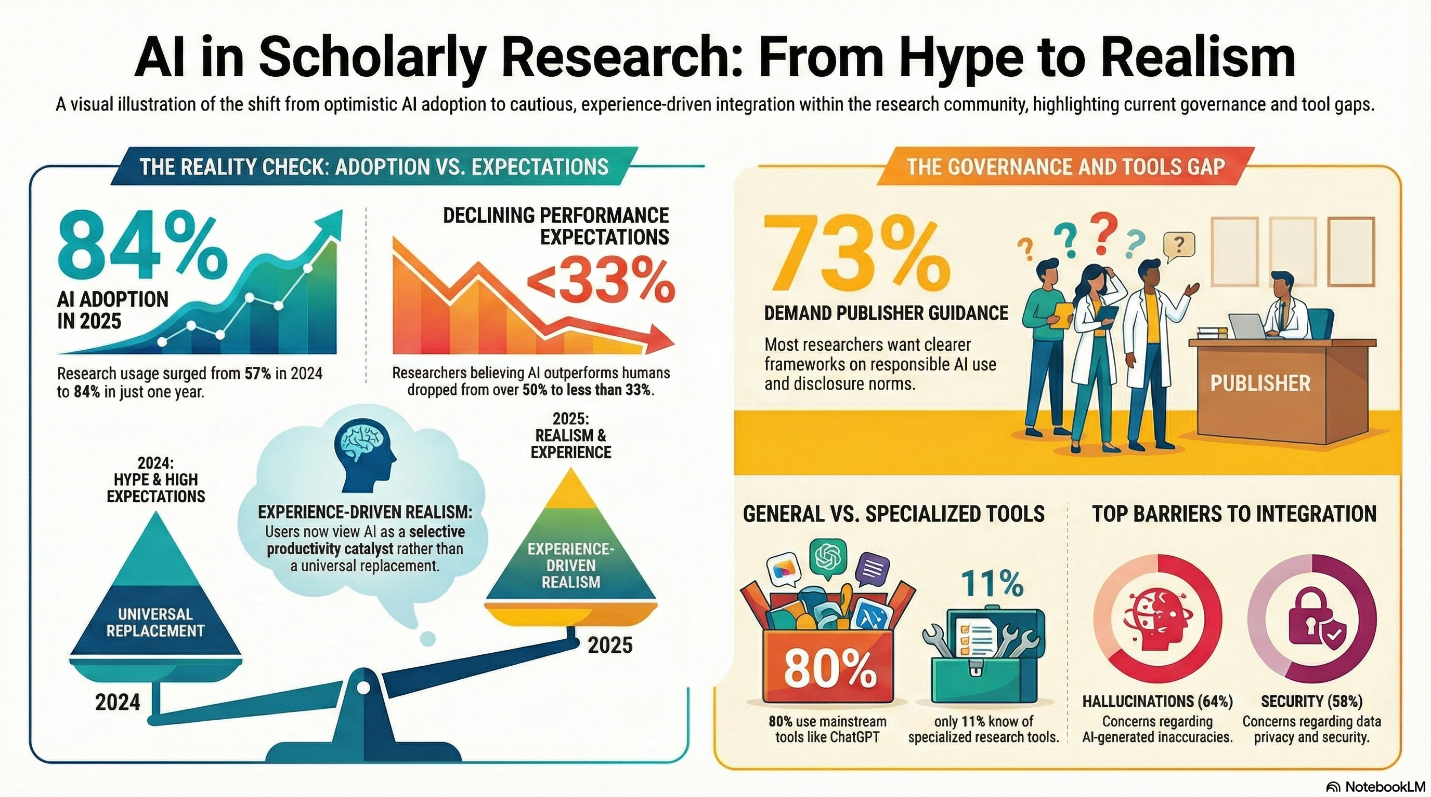

The scale of AI adoption is striking. AI usage among researchers increased significantly from 57% in 2024 to 84% in 2025, with 62% actively using AI in research and publication tasks.

Yet the more important insight is what happened alongside this growth: expectations declined.

In 2024, researchers believed AI outperformed humans in over half of tested research use cases. In 2025, that figure dropped to less than one-third. This signals a critical shift from hype-driven optimism to experience-driven realism.

Interestingly, this pattern aligns with broader academic evidence. Large-scale analyses of preprints show that GenAI is accelerating workflows and improving productivity, but is acting more as a selective catalyst than as a universal replacement for human expertise.

This is a critical turning point. The responsible use of AI begins when users understand both its capabilities and limits. Unrealistic expectations lead to misuse; calibrated expectations enable meaningful integration.

The research community appears to be reaching that inflection point.

2. AI Adoption is Outpacing Institutional Policy, Creating a Governance Gap

The second finding reveals a structural gap. While researchers are integrating AI into their workflows, institutional policy infrastructure remains insufficient.

According to the report, 57% of researchers (more than half) cite a lack of training and guidelines as a major barrier to AI use, and only 41% feel their organization provides adequate support. Most notably, 73% say publishers should provide clearer guidance on responsible AI use.

This confirms what many in scholarly publishing are already observing: researchers are using AI, but struggling to navigate the ethical waters because the frameworks are missing. Without clarity on acceptable use, disclosure norms, and verification practices, AI integration risks undermining research integrity rather than strengthening it.

This is precisely where publishers, institutions, and research integrity leaders play a critical role.

3. Researchers are Heavily Relying on General-Purpose AI Tools, Raising New Integrity and Transparency Challenges

A structurally significant finding: researchers overwhelmingly rely on general-purpose AI tools rather than research-specific solutions.

Nearly 80% use mainstream AI tools like ChatGPT, while only one in four use specialized research-focused AI solutions. Most rely on freely available tools rather than institutionally supported systems. This gap is also fueled by a lack of awareness: on average, only 11% of researchers had heard of the specialized research tools surveyed.

While accessibility has accelerated adoption, it has also amplified risks related to accuracy, security, and traceability. As researchers gain more experience with these tools, 64% cite concerns about hallucinations and inaccuracies as a barrier to AI use. Moreover, growing concerns about information security and privacy (58%) are likely to hinder greater AI use.

These risks are not theoretical. Recent analyses show that AI-assisted writing is increasing rapidly across research publications, while transparent disclosure of that use remains extremely rare.

Responsible AI adoption requires infrastructure designed specifically for research, not just convenience-driven adoption of general tools. This includes tools and workflows that support transparency, verifiability, and ethical integration into the research lifecycle. Emerging tools, such as Enago’s AI Disclosure Statement Generator, are addressing this need by helping researchers document AI use in a structured and transparent manner, aligned with evolving publisher and institutional expectations.

As AI becomes embedded in everyday research workflows, transparency can no longer be treated as optional. It must become a standard part of the research process.

What These Findings Mean for the Future of Responsible AI in Research

Collectively, these findings reveal a deeper story.

The research community has moved beyond initial experimentation with GenAI. Researchers now understand both its value and its risks. They are using AI extensively, but also recognizing the need for clear guidance, governance, and responsible implementation. The conversation is no longer about whether and where researchers will use AI. It is about how AI use will be governed, disclosed, and verified.

This transition requires coordinated action across the research ecosystem:

- Publishers must define guidelines and develop standardized disclosure requirements

- Institutions must provide governance frameworks and training

- Researchers must apply human oversight and verify AI-assisted outputs

And increasingly, researchers are looking to publishers, institutions, and research integrity leaders to help define that path.

Enabling Responsible AI in Research: What happens next will define trust

The research community has already crossed the adoption threshold. AI is no longer a future capability. It is a present-day force shaping how knowledge is created, written, and disseminated.

But adoption alone does not determine impact. Responsibility does. The next phase of AI in research will not be defined by how powerful these tools become, but by how transparently and accountably they are integrated into the scholarly ecosystem. Clear disclosure standards, human oversight, and policy-aligned infrastructure will determine whether AI strengthens research integrity or introduces risks to research credibility.

This is a defining moment for publishers, institutions, and research integrity leaders. The choices made now around disclosure, governance, and verification will shape whether AI strengthens research credibility or erodes trust in scientific outputs.

At Enago, we are committed to helping the global research community operationalize responsible AI use. Our Responsible AI initiative provides practical infrastructure—including standardized disclosure tools, governance frameworks, and expert-led workshops—to help researchers, publishers, and institutions integrate AI transparently and in alignment with emerging policy requirements.

Our interactive Responsible AI workshops equip research teams with the knowledge and workflows needed to implement compliant AI disclosures, establish oversight mechanisms, and strengthen accountability across the research lifecycle. Partner with Enago or book a Responsible AI workshop to build the policies, skills, and infrastructure required to ensure AI strengthens, rather than compromises, research integrity.

Frequently Asked Questions

How is generative AI transforming academic research workflows?

Generative AI is accelerating multiple stages of the research lifecycle, including literature discovery, summarization, drafting, editing, and language refinement. Researchers report significant gains in efficiency, enabling faster manuscript preparation and improved clarity of scientific communication. However, human oversight remains essential to verify factual accuracy, interpret findings correctly, and ensure scholarly rigor.

Why is responsible AI governance important for researchers and publishers?

As AI adoption accelerates faster than institutional policy development, governance provides the necessary framework to ensure AI strengthens rather than compromises research integrity. It includes clear disclosure standards, verification requirements, ethical usage guidelines, and training programs. Without governance, researchers face uncertainty about acceptable AI use, while publishers face challenges in maintaining transparency and accountability. Effective governance creates consistency, protects research credibility, and enables sustainable integration of AI into scholarly workflows.

What are the risks of using generative AI in scholarly publishing?

The key risks include factual inaccuracies (hallucinations), lack of transparency in AI use, data privacy concerns, and unclear accountability. When researchers rely on general-purpose AI tools without structured disclosure or verification workflows, it becomes difficult for publishers and readers to assess the reliability and provenance of the work. Responsible AI use requires clear disclosure, human validation, and alignment with publisher and institutional policies to ensure credibility and trust in scholarly outputs.

How can researchers use AI responsibly while maintaining research integrity?

Researchers can use AI responsibly by treating it as an assistive tool and maintaining full accountability for the final output. This includes verifying all AI-generated content, disclosing AI use transparently, protecting sensitive data, and following publisher and institutional guidelines. Increasingly, organizations like Enago provide structured tools, governance frameworks, and training workshops that help researchers implement compliant disclosure practices and responsible AI workflows.