Publisher AI Policies Have Changed. Your Disclosure Practices Probably Haven’t.

By 2026, generative AI has moved from novelty to norm in research workflows, but policy adoption has far outpaced changes in researcher behavior. A large-scale analysis of more than five million papers found that around 70% of journals had adopted some form of AI policy, yet among 75,000 papers published since 2023, only 76 (about 0.1%) explicitly disclosed AI use. Meanwhile, publishers such as Springer Nature retracted thousands of articles in a single year over integrity concerns such as suspicious citations and, in some cases, undisclosed AI assistance.

For authors, this is no longer a theoretical ethics debate; it is a submission risk. In this piece, we map how AI policies evolved from 2023 through 2026, contrast restrictive and permissive policies, show how structured AI disclosure is emerging as a new norm, and outline a practical workflow to stay compliant.

Why AI Policies Matter More Than Ever

Across publishers and funders, a clear consensus has solidified: generative AI can be used, but its role must be transparent and humans must remain accountable. The International Committee of Medical Journal Editors (ICMJE), whose guidance underpins many medical and life-science journals, explicitly states that generative AI tools cannot be authors and that any AI assistance in manuscript preparation must be clearly disclosed.

Science and other AAAS journals go further, requiring detailed reporting of AI use, including tool names, versions, and even prompts in some cases. An early editorial in Science (“ChatGPT is fun, but not an author”) argued that AI-generated text conflicts with expectations of originality and authorship, a position that shaped many subsequent policies.

At the funder level, agencies such as the National Institutes of Health (NIH) and the National Science Foundation (NSF) have introduced strict safeguards. NIH guidance prohibits peer reviewers from uploading grant applications to AI tools, citing confidentiality risks, while NSF encourages disclosure of AI use in project descriptions but similarly bars reviewers from using unapproved AI systems. Beginning in September 2025, NIH has signaled that applications substantially developed by AI may be rejected, reinforcing and extending a trend that began with earlier 2023–2024 guidance.

The Three Policy Categories Every Author Should Recognize

Despite the variation between publishers, written AI policies in 2026 cluster into three recognizable categories. Knowing which category your target journal falls into is the single most useful piece of information you can have before submission.

1. Restrictive or "ban-first" policies

Some journals treat AI-generated text as fundamentally incompatible with originality and authorship. Early versions of Science's policy effectively restricted AI-generated text and figures in manuscripts, treating their use as a form of misconduct akin to image manipulation, in line with the stance of the "ChatGPT is fun, but not an author" editorial. Funders mirror this stance in critical processes such as peer review and grant evaluation.

Practical implication: If your target journal or funder is in this category, AI-assisted drafting can sink the submission even when disclosed. Verify the specific policy before using AI for anything beyond grammar and spelling.

2. Structured disclosure with guardrails

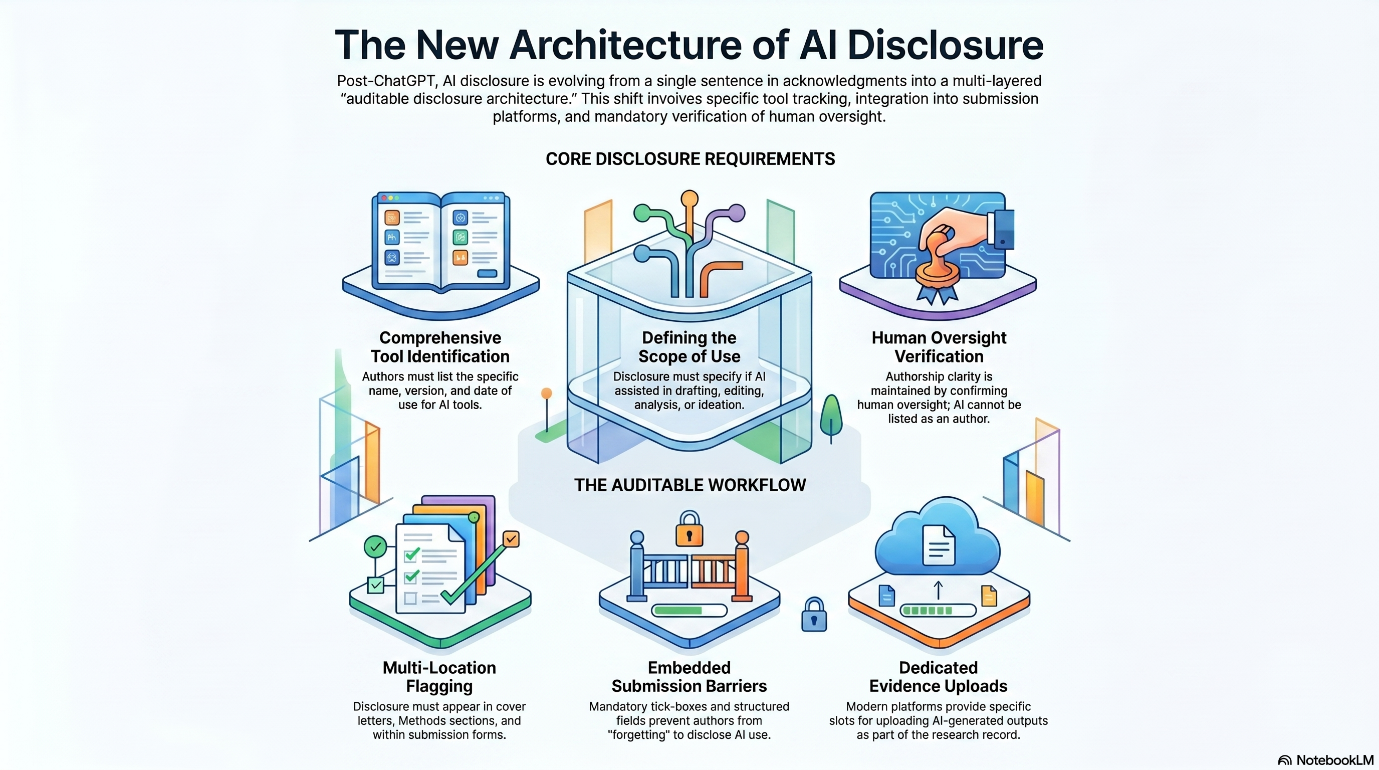

This is the dominant approach in 2026. Major publishers including Elsevier, Springer Nature, Wiley, Taylor & Francis, and SAGE permit AI use but require detailed disclosure tied to specific manuscript locations. Elsevier's current policy, updated in September 2025, requires AI disclosure in a separate section before the references and prohibits using generative AI to create or alter images in submitted manuscripts unless that use is part of the research design or methods. ICMJE asks authors to acknowledge AI used for writing assistance in the acknowledgments section and to describe AI used for data collection, analysis, or figure generation in the methods section.

SAGE makes a useful distinction between assistive AI (refining your own text), which does not require disclosure, and generative AI (creating new content), which must be cited and referenced.

Practical implication: AI use is allowed, but the rules are not uniform. Disclosure requirements vary not just between publishers but between journals from the same publisher. Read the specific journal's author guidelines, not the publisher's umbrella page.

3. Permissive but transparency-first policies

Some publishers, such as Taylor & Francis and SAGE, explicitly permit generative AI for tasks such as identifying research gaps, generating ideas, and refining language, provided that use is declared, outputs are verified, and AI systems are not listed as authors. A 2025 framework by Resnik and Hosseini argues that disclosure should be mandatory only when AI use is "intentional and substantial," offering a more permissive interpretation that some journals have adopted.

The Committee on Publication Ethics (COPE) has also indicated that editors should not reject manuscripts solely on suspicion of AI use, and should focus instead on actual policy violations or other integrity problems.

Practical implication: These policies are the most forgiving, but they still require disclosure. The risk here is assuming that "permissive" means "no disclosure needed." It does not.

Quick reference

| Category | Examples | Key requirement | Disclosure location |
|---|---|---|---|
| Restrictive | Science (early policy), NIH, NSF (peer review) | Limit or avoid AI for substantive content | Disclosure alone is often insufficient; avoid use |
| Structured disclosure | Elsevier, Springer Nature, Wiley, Taylor & Francis, ICMJE journals | Disclose tool, version, purpose, location | Methods or Acknowledgments (Elsevier: separate section before references) |
| Permissive transparency | SAGE (assistive AI exempt), several humanities journals | Disclose if generative; verify all outputs | Methods, Acknowledgments, or cover letter |

The Retraction Risk Most Authors Are Missing

Here is the part that should change how you think about disclosure. In August 2025, COPE updated its retraction guidelines to explicitly include "undisclosed involvement of artificial intelligence" as grounds for retraction, alongside paper mills, identity theft, and fraud. This is a structural change. Failure to disclose AI use is no longer just a policy breach; it is a misrepresentation that can justify pulling a published paper from the literature.

Now consider how widespread the gap between actual use and disclosed use already is. A 2024 study run by the American Association for Cancer Research, reported in Science, used AI detection tools on 7,177 manuscripts submitted to AACR journals between January and June 2024. The detector flagged AI-generated text in the abstracts of 36% of submissions. Only 9% of authors disclosed AI use when asked in the submission process.

The implication is uncomfortable: if the detector's flags are accurate and the authors who disclosed are among those flagged, roughly three out of every four AI-using authors at AACR were in violation of their journals' disclosure requirements. Elsewhere, a separate analysis of 25,114 biomedical manuscripts submitted to 49 BMJ journals found that only 5.7% disclosed AI use, while researcher surveys estimate actual usage at between 28% and 76%.
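The "three out of four" estimate follows directly from the two AACR percentages. As a worked check, assuming the detector's flags are accurate and every author who disclosed AI use is among the 36% flagged:

```latex
\frac{\text{flagged} - \text{disclosed}}{\text{flagged}} = \frac{0.36 - 0.09}{0.36} = \frac{0.27}{0.36} = 0.75
```

If some disclosing authors were not flagged, or some flags were false positives, the true share would differ from this estimate.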

That gap is now a retraction liability for any paper that reaches publication. The earlier permissive era, in which vague policies and inconsistent enforcement made non-disclosure a low-risk shortcut, is over.

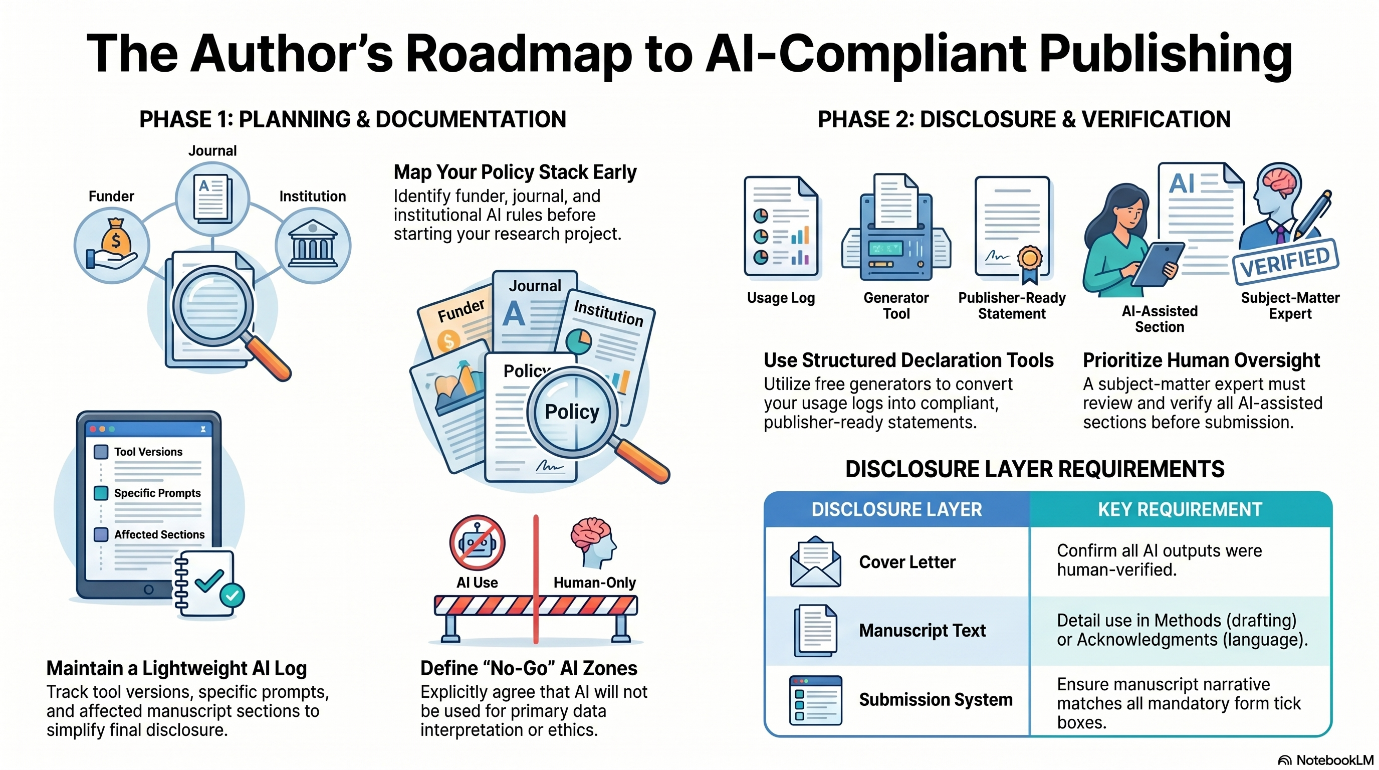

A Pre-Submission Workflow That Actually Works

The following six-step workflow takes about 30 minutes and protects against the most common failure modes.

Step 1: Identify your target journal's category.

Look up the specific journal's AI policy, not just the publisher umbrella page. Determine whether it falls into restrictive, structured disclosure, or permissive transparency.

Step 2: Document AI use as you write, not after.

Maintain a simple log: which tool (e.g., ChatGPT, Claude, Gemini), which version, what you used it for (language editing, brainstorming, summarization, code generation), and which sections it touched. Reconstructing this at submission time is unreliable and error-prone.
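A log like this can be as simple as a spreadsheet. As one illustration, here is a minimal Python sketch that keeps the log as CSV; the `AIUseEntry` class, the column names, and the semicolon-separated section list are hypothetical choices, not a format any publisher prescribes:

```python
import csv
import io
from dataclasses import asdict, dataclass, field

# Hypothetical column order for the CSV log (not a publisher-mandated format).
FIELDS = ["used_on", "tool", "version", "purpose", "sections"]


@dataclass
class AIUseEntry:
    """One hypothetical log row, recorded at the time the tool is used."""
    used_on: str   # ISO date, e.g. "2026-01-15"
    tool: str      # e.g. "ChatGPT", "Claude", "Gemini"
    version: str   # model/version string exactly as the tool reports it
    purpose: str   # e.g. "language editing", "code generation"
    sections: list[str] = field(default_factory=list)  # sections the output touched


def append_entries(stream, entries, write_header=False):
    """Write entries as CSV rows; the section list is joined with semicolons."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        row["sections"] = ";".join(entry.sections)
        writer.writerow(row)


def read_entries(stream):
    """Parse CSV rows back into AIUseEntry objects."""
    entries = []
    for row in csv.DictReader(stream):
        sections = row["sections"].split(";") if row["sections"] else []
        entries.append(
            AIUseEntry(row["used_on"], row["tool"], row["version"], row["purpose"], sections)
        )
    return entries


# Demonstration with an in-memory buffer; for a real log, open a file with newline="".
buffer = io.StringIO(newline="")
append_entries(
    buffer,
    [AIUseEntry("2026-01-15", "ChatGPT", "<version as reported>", "language editing",
                ["Introduction", "Discussion"])],
    write_header=True,
)
buffer.seek(0)
log = read_entries(buffer)
```

Appending a row each time a tool is used keeps the record contemporaneous, which is the point of this step.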

Step 3: Verify everything AI touched.

AI tools hallucinate citations, fabricate statistical claims, and produce confident-sounding text that is factually wrong. Every reference, every numeric value, and every factual claim that AI generated or modified must be checked against a primary source. This step is non-negotiable.

Step 4: Draft your disclosure statement.

A compliant statement names the tool and version, describes the specific task, identifies the sections involved, and confirms human verification. A working template:

The authors used [tool name and version] to [specific task: e.g., improve the clarity and grammar of the introduction and discussion sections]. All AI-generated or AI-modified content was reviewed and verified by the authors, who take full responsibility for the integrity and accuracy of the manuscript.
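To keep the wording identical across the manuscript, cover letter, and submission form, the template can be filled from the fields recorded in Step 2. A minimal sketch; `disclosure_statement` and its parameters are hypothetical, and the result should still be compared against the target journal's own wording:

```python
def disclosure_statement(tool: str, version: str, task: str, sections: list[str]) -> str:
    """Fill the disclosure template with tool, version, task, and sections touched."""
    if not sections:
        raise ValueError("name at least one manuscript section the tool touched")
    if len(sections) == 1:
        where = f"the {sections[0]} section"
    else:
        # e.g. "the introduction and discussion sections"
        where = "the " + ", ".join(sections[:-1]) + f" and {sections[-1]} sections"
    return (
        f"The authors used {tool} ({version}) to {task} in {where}. "
        "All AI-generated or AI-modified content was reviewed and verified by the "
        "authors, who take full responsibility for the integrity and accuracy of the "
        "manuscript."
    )


print(disclosure_statement(
    "ChatGPT", "<version as logged>", "improve the clarity and grammar",
    ["introduction", "discussion"],
))
```

Refusing an empty section list is deliberate: a statement that does not say where AI was used fails the "identifies the sections involved" requirement above.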

Step 5: Place the disclosure in the right location.

Use the methods section if AI was used for data collection, analysis, or figure generation. Use the acknowledgments section for writing assistance. Include a brief mention in the cover letter regardless of where the formal disclosure appears, so editors are aware upfront.
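The placement rule in this step can be written as a small lookup. A sketch following the ICMJE-style split described earlier; the purpose labels are hypothetical, and a journal-specific rule (for example, Elsevier's separate disclosure section before the references) takes precedence:

```python
# Hypothetical purpose labels; use whatever vocabulary your AI-use log uses.
METHODS_PURPOSES = {"data collection", "analysis", "figure generation"}
WRITING_PURPOSES = {"language editing", "writing assistance"}


def disclosure_locations(purposes: set[str]) -> list[str]:
    """Return where to disclose, given the set of purposes AI was used for."""
    if not purposes:
        return []  # nothing logged, nothing to place
    unknown = purposes - METHODS_PURPOSES - WRITING_PURPOSES
    if unknown:
        # Purposes the rule does not cover need a manual check of the journal policy.
        raise ValueError(f"check the journal's policy for: {sorted(unknown)}")
    locations = []
    if purposes & METHODS_PURPOSES:
        locations.append("Methods")
    if purposes & WRITING_PURPOSES:
        locations.append("Acknowledgments")
    locations.append("Cover letter")  # mentioned regardless of the formal location
    return locations


print(disclosure_locations({"analysis", "language editing"}))
```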

Step 6: Confirm the journal's specific submission form requirements.

Many journals (BMJ, AACR, Elsevier titles) now have mandatory AI use questions in their manuscript tracking systems. Failing to answer these consistently with your in-manuscript disclosure can itself trigger an editorial query.
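A quick self-check before submitting is to compare the tracking-system answers with the in-manuscript disclosure. This is a hypothetical sketch: real submission systems phrase and store these questions differently, so the inputs here are plain values copied in by hand:

```python
def form_mismatches(form_says_ai_used: bool, form_tools: set[str],
                    disclosed_tools: set[str]) -> list[str]:
    """List inconsistencies between a submission-form answer and the manuscript disclosure."""
    problems = []
    if form_says_ai_used != bool(disclosed_tools):
        problems.append("form and manuscript disagree on whether AI was used")
    # Compare tool names case-insensitively ("chatgpt" matches "ChatGPT").
    form_norm = {t.casefold() for t in form_tools}
    disclosed_norm = {t.casefold() for t in disclosed_tools}
    for tool in sorted(disclosed_norm - form_norm):
        problems.append(f"'{tool}' is disclosed in the manuscript but missing from the form")
    for tool in sorted(form_norm - disclosed_norm):
        problems.append(f"'{tool}' is listed on the form but not disclosed in the manuscript")
    return problems


print(form_mismatches(True, {"ChatGPT"}, {"chatgpt", "Gemini"}))
```

An empty list means the two answers agree; anything else is worth fixing before an editor asks about it.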

For authors who want a faster, structured way to produce compliant disclosure statements, Enago's AI Disclosure Statement Generator walks through the publisher-aligned fields and produces a ready-to-paste disclosure tailored to ICMJE and major publisher requirements.

What This Means for Your Next Submission

The publishers have changed the rules. Most authors have not caught up. The gap between actual AI use and disclosed AI use is the single biggest unaddressed integrity risk in academic publishing right now, and as of 2025, it is a documented basis for retraction.

Closing the gap is not difficult. It requires identifying the policy category before submission, documenting AI use as it happens rather than after, verifying every AI-generated output, and using a clear disclosure template in the right manuscript location. Thirty minutes of pre-submission compliance work protects a paper, a reputation, and a career.

For institutions building AI literacy among faculty and graduate students, the Enago Responsible AI Initiative provides structured guidance and resources designed to help researchers navigate publisher requirements with practical confidence.

Sources and References

1. He, Y. & Bu, Y. (2025). Academic Journals' AI Policies Fail to Curb the Surge in AI-assisted Academic Writing. arXiv preprint. https://arxiv.org/pdf/2512.06705

2. Brainard, J. (2024). Far more authors use AI to write science papers than admit it, publisher reports. Science. https://www.science.org/content/article/far-more-authors-use-ai-write-science-papers-admit-it-publisher-reports

3. Committee on Publication Ethics (2025). Retraction Guidelines, August 2025 update. https://publicationethics.org/guidance/guideline/retraction-guidelines

4. Committee on Publication Ethics (2025). 2025 Retraction guidelines update: key changes. https://publicationethics.org/news-opinion/2025-retraction-guidelines-update-key-changes

5. Elsevier (2025). Generative AI policies for journals (updated September 2025). https://www.elsevier.com/about/policies-and-standards/generative-ai-policies-for-journals

6. International Committee of Medical Journal Editors. Defining the Role of Authors and Contributors. https://www.icmje.org/recommendations/browse/roles-and-responsibilities/defining-the-role-of-authors-and-contributors.html

7. SAGE Publishing. Artificial intelligence policy. https://www.sagepub.com/journals/publication-ethics-policies/artificial-intelligence-policy

8. Resnik, D.B. & Hosseini, M. (2025). Disclosing artificial intelligence use in scientific research and publication: When should disclosure be mandatory, optional, or unnecessary? Accountability in Research. https://www.tandfonline.com/doi/full/10.1080/08989621.2025.2481949

9. BMJ Group cross-sectional study (2025). Authors self-disclosed use of artificial intelligence in research submissions to 49 biomedical journals. medRxiv. https://www.medrxiv.org/content/10.1101/2025.10.24.25338574.full.pdf

10. Retraction Watch (2025). New COPE retraction guidelines address paper mills, third parties, and more. https://retractionwatch.com/2025/09/04/new-cope-retraction-guidelines-address-paper-mills-third-parties-and-more/
