By: Enago

Journals want transparency. Prompt disclosure could be the key.

Artificial intelligence (AI), particularly large language models (LLMs), has become widely embedded in modern research workflows. According to Elsevier, researchers report using AI tools for literature reviews (51%), summarization (61%), data analysis and interpretation (38%), and drafting research papers or reports (38%). AI systems are no longer peripheral aids; they increasingly shape how researchers explore literature, frame questions, analyze information, and communicate findings.

Recent studies have shown that prompts can influence AI-generated outcomes as much as the model itself: what researchers ask is often as important as the system they use. This growing dependence on prompts raises a critical question for responsible AI use in research: If prompts significantly shape results, should they remain invisible?

Well-Designed vs. Vague Prompts: Why the Difference Matters

The quality of AI-generated output is greatly affected by the quality of the prompt provided. Consider the following examples:

Poorly designed prompts may introduce bias, oversimplification, or hallucinated claims, while well-refined prompts can significantly enhance accuracy.

This contrast sets the stage for a deeper question: When prompts shape analytical direction, should they remain undocumented?

Why Prompts Should Be Treated as Part of Research Methodology

In traditional research, methods sections document how results were obtained. When AI tools contribute to data analysis, interpretation, or writing, prompts directly shape these outcomes. Ignoring them in methodological reporting creates a transparency gap. Prompts encode:

- Research assumptions

- Scope limitations

- Inclusion and exclusion criteria

- Analytical framing

Hence, treating prompts as a component of the methodology section makes AI use auditable and supports reproducible human-AI collaboration.

Prompt Sharing, Transparency, and Reproducibility

Transparency and reproducibility are cornerstones of robust research. Recent research shows that even small changes in prompts can significantly alter AI outputs, introducing a hidden variable that challenges reproducibility and validation.

Sharing prompts fosters reproducibility by revealing how AI-generated results were derived. However, researchers typically disclose only the name of the AI tool and its version, not the detailed prompts that shaped key outputs. This creates a transparency gap: the most influential methodological layer guiding AI outputs is largely invisible. Without prompt disclosure, reviewers and peers cannot fully evaluate, replicate, or trust AI-assisted findings.

To illustrate this, the following examples demonstrate how different prompt structures lead to different outputs.

(Note: All examples were generated using the ChatGPT free version: ChatGPT 5 Mini, Date: 23 January 2026)

Scenario 1: Prompt variation in AI-assisted language correction

Two researchers use the same LLM for language correction on the same academic paragraph, but phrase their prompts differently.

- Researcher A’s prompt:

“Edit the following paragraph for grammar, spelling, and clarity. Do not change the meaning, intent, or academic tone.”

- Researcher B’s prompt:

“Change the following paragraph to improve readability, academic quality, and boost the research impact. Ensure the paragraph is more polished and impactful.”

- Original paragraph given to the AI tool:

“In ABCD-mutant lung cancer cells, gene expression is strongly shaped by the constitutive activation of the ABCD signaling pathway, which drives continuous downstream signaling even when there is no ligand. This results in increased transcription of cell proliferation genes, survival, and metabolic adaptation, like MYC, CCND1, and several anti-apoptotic factors. Simultaneously, feedback regulators such as DUSP and SPRY family genes are also up-regulated, but their inhibitory effects are often insufficient to fully dampen the pathway activity. Studies using RNA sequencing have shown that ABCD activation alters protein-coding genes and non-coding RNAs, which further complicates the regulatory landscape. However, the expression patterns could change based on the specific ABCD mutation and treatment context. This makes interpretation of these profiles challenging and not direct to compare with other experiments.”

Disclaimer: The above paragraph is hypothetical in nature and is intended solely for explanatory purposes. It does not represent real or validated research outcomes.

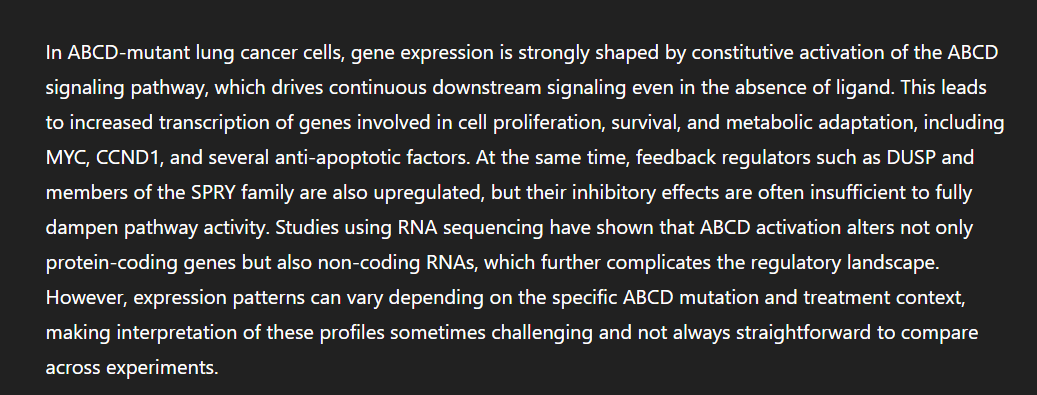

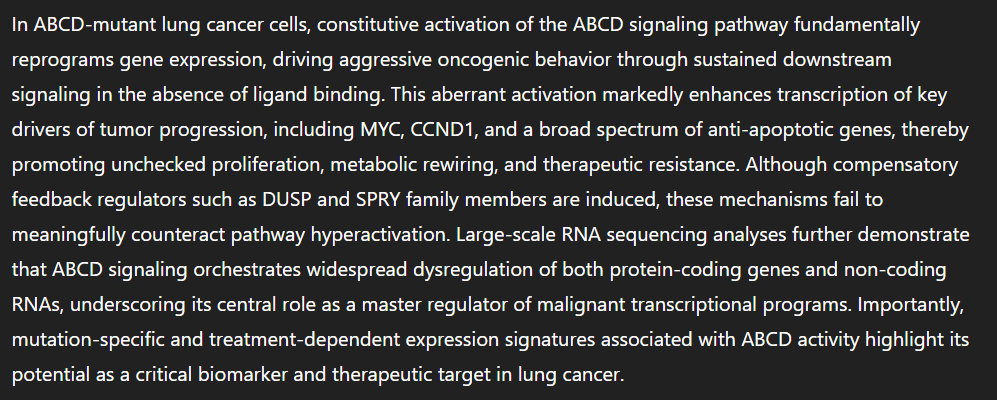

Output based on Researcher A’s prompt: (ChatGPT 5 Mini, Date: 23 January 2026)

Output based on Researcher B’s prompt: (ChatGPT 5 Mini, Date: 23 January 2026)

Scenario 2: Prompt variation in AI-assisted results analysis and trend interpretation

Two researchers use the same AI system to analyze the same dataset and identify trends. Although both intend to explore “overall patterns,” subtle differences in their prompts lead the AI to produce analytically different interpretations.

- Researcher A’s prompt:

“Analyze this dataset and summarize the main trends in student performance over time. Keep the interpretation neutral and descriptive. Do not fabricate or hallucinate. Do not introduce data that is not a part of the shared dataset”

- Researcher B’s prompt:

“Identify significant trends, inequalities, and areas of concern in student performance over time from this dataset. Highlight notable changes and give explanations.”

- Dataset Used (Hypothetical)

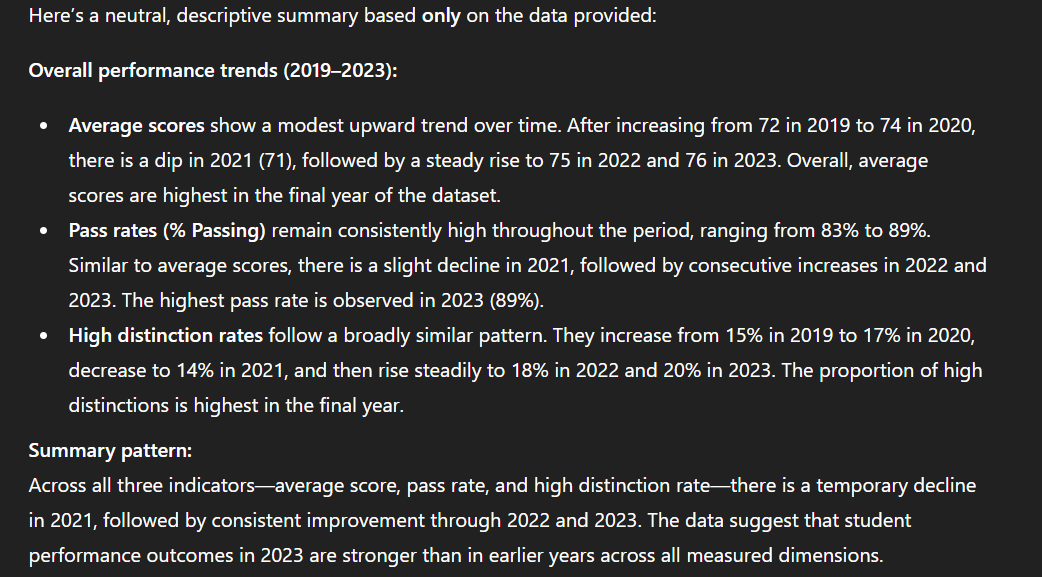

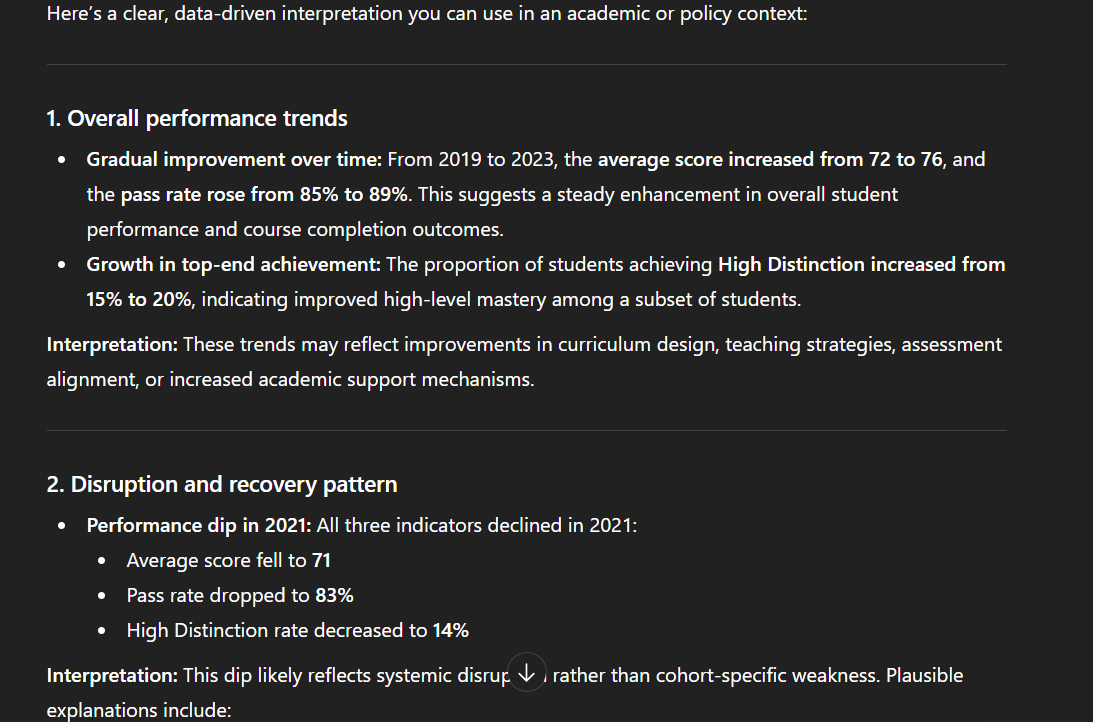

Output based on Researcher A’s prompt: (ChatGPT 5 Mini, Date: 23 January 2026)

Output based on Researcher B’s prompt: (ChatGPT 5 Mini, Date: 23 January 2026)

This example demonstrates that trend interpretation is mediated by prompt design rather than derived automatically from data alone. When prompt instructions are not disclosed, readers cannot determine:

• Why particular trends were emphasized over others,

• Whether interpretive claims reflect empirical patterns or prompt-induced framing, and

• The extent to which methodological decisions were embedded in prompt construction rather than transparently articulated by the researcher.
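Once both prompts are disclosed, the difference in instructions becomes something a reader can inspect directly. The following is a minimal sketch, not part of the original analysis, that uses Python's standard `difflib` module to list the words unique to each of the two Scenario 2 prompts. The function name `instruction_diff` and the word-level comparison are illustrative assumptions; a word-level diff only surfaces wording differences and says nothing about how a model will respond to them.

```python
import difflib

# The two Scenario 2 prompts, copied from the text above.
prompt_a = (
    "Analyze this dataset and summarize the main trends in student "
    "performance over time. Keep the interpretation neutral and descriptive. "
    "Do not fabricate or hallucinate. Do not introduce data that is not a "
    "part of the shared dataset"
)
prompt_b = (
    "Identify significant trends, inequalities, and areas of concern in "
    "student performance over time from this dataset. Highlight notable "
    "changes and give explanations."
)


def instruction_diff(a: str, b: str):
    """Hypothetical helper: words found only in a, only in b, and a similarity ratio."""
    # Lowercase and strip trailing punctuation so "time." and "time" compare equal.
    a_words = [w.strip(".,").lower() for w in a.split()]
    b_words = [w.strip(".,").lower() for w in b.split()]
    matcher = difflib.SequenceMatcher(None, a_words, b_words)
    only_a, only_b = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            only_a.extend(a_words[i1:i2])
        if tag in ("replace", "insert"):
            only_b.extend(b_words[j1:j2])
    return only_a, only_b, matcher.ratio()


only_a, only_b, similarity = instruction_diff(prompt_a, prompt_b)
print("Only in A:", " ".join(only_a))
print("Only in B:", " ".join(only_b))
print("Word-level similarity:", round(similarity, 2))
```

A comparison like this makes it visible that Researcher A's prompt carries constraints such as "neutral" and "descriptive", while Researcher B's asks for "inequalities", "concern", and "explanations".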

When such prompts are not disclosed, these differences are hidden from readers, reviewers, and collaborators, making it difficult to assess how AI systems influenced the research process. This has several important implications for research integrity and reproducibility:

1. Reproducibility is undermined.

If prompts are not reported, other researchers cannot replicate or validate findings—even when using the same dataset and the same AI tool. The reasoning constraints, assumptions, and level of AI intervention embedded in the prompt remain invisible, preventing meaningful verification.

2. Hidden prompt differences can amplify bias and inequality.

Minor wording changes can steer AI systems to privilege certain regions, disciplines, languages, or writing norms. In language correction tasks, this may subtly standardize texts toward dominant academic styles or cultural frames, skewing representation and reinforcing systemic bias without researchers’ awareness.

3. Critical research decisions may be unknowingly influenced by AI.

Without prompt transparency, it becomes impossible to evaluate whether AI outputs shaped argument framing, emphasis, methodological interpretation, or conclusions in ways that were prompt-driven rather than evidence-driven. This limits peer reviewers’ and policymakers’ ability to judge the methodological soundness of AI-assisted work.

These examples reveal a harsh truth: without prompt transparency, AI-assisted research remains vulnerable to hidden biases and irreproducible results. This underscores the urgent need to scrutinize existing journal policies and their gaps in prompt disclosure.

Gaps in Current Prompt Disclosure Frameworks

Most journal policies currently require authors to disclose whether AI tools were used, but not how they were used.

Some guidelines encourage disclosing prompts in the methods section, while others only hint at their relevance by encouraging methodological transparency without mandating prompt disclosure.

This creates a policy gap: prompts, despite being a key source of methodological influence, are often excluded from disclosure frameworks.

Why Prompt Disclosure Matters for Editors and Peer Reviewers

Addressing this gap is critical because prompt transparency allows editors and reviewers to:

- Evaluate reliability and rigor of AI-assisted content

Access to prompts allows reviewers to assess whether AI tools were guided by clear, appropriate, and methodologically sound instructions, supporting structured and reproducible outputs.

- Identify the analytical constraints

Prompt access enables editors to understand how AI systems contributed to the generation or analysis of the results and to assess the extent and nature of human involvement across the research and writing process.

- Assess potential bias introduced by prompt phrasing

Disclosure of prompts allows reviewers to evaluate whether biased language, selective framing, or outcome-driven instructions influenced the AI-generated content.

- Identify potential cases of prompt hacking and prompt injection

Transparent disclosure of prompts helps editors assess whether AI systems were instructed to bypass safeguards, fabricate information, or violate journal policies or responsible AI standards.

Without prompt disclosure, editorial and peer review is limited to evaluating the final output, missing the underlying methodological process. Journals can guide authors to submit prompts in supplementary materials or methods appendices, improving review quality.
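One way such a supplementary prompt record could look is sketched below. This is an illustration under stated assumptions, not a format required by any journal: the class `PromptDisclosure` and all of its field names are hypothetical, and the values reuse Researcher A's prompt and the tool details from Scenario 1. The SHA-256 digest is added only so that a reviewer can check that the disclosed prompt text has not been altered since submission.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class PromptDisclosure:
    """One disclosed AI interaction. All field names are hypothetical."""
    tool: str
    version: str
    date_used: str  # ISO 8601 date
    task: str
    prompt: str

    def to_record(self) -> dict:
        """Return the entry as a dict, plus a digest of the exact prompt text."""
        record = asdict(self)
        record["prompt_sha256"] = hashlib.sha256(
            self.prompt.encode("utf-8")
        ).hexdigest()
        return record


# Example entry built from Scenario 1 (Researcher A).
entry = PromptDisclosure(
    tool="ChatGPT (free version)",
    version="ChatGPT 5 Mini",
    date_used="2026-01-23",
    task="language correction",
    prompt=(
        "Edit the following paragraph for grammar, spelling, and clarity. "
        "Do not change the meaning, intent, or academic tone."
    ),
)

# Serialize for inclusion in supplementary materials or a methods appendix.
disclosure_json = json.dumps(entry.to_record(), indent=2, ensure_ascii=False)
print(disclosure_json)
```

A record like this keeps the tool name and version that authors already tend to report, and adds the element that is usually missing: the prompt itself.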

Why Journal AI Policies Must Expand or Evolve

As AI becomes integral to research, journals must evolve from mere AI-use disclosure to AI-process transparency. Including prompts in disclosure can:

- Strengthen reproducibility

- Improve peer-review quality

- Reduce covert manipulation of AI outputs

Prompt disclosure is a critical step toward transparency, reproducibility, and research integrity in AI-assisted scholarship. By documenting how prompts shape outputs, researchers allow editors, reviewers, and peers to understand not just what AI generated, but why and how. This enhances methodological clarity and aligns AI use with ethical research practices.

As policies shift toward deeper transparency, researchers and institutions must adapt proactively, not reactively. If you’re preparing a manuscript, use the Enago AI Disclosure Generator to create a clear, journal-ready statement that aligns with evolving publisher expectations. To stay ahead of broader policy changes and understand how prompt disclosure is reshaping publication standards, consider joining our Responsible AI workshops and webinars.
