Adapting to the AI Revolution in Research: What You Need to Know and Do

Between late 2022 and early 2026, generative AI moved from novelty to normalcy in research workflows. What began as cautious experimentation with LLMs and chatbots has evolved into widespread, often unspoken integration across everyday academic tasks.

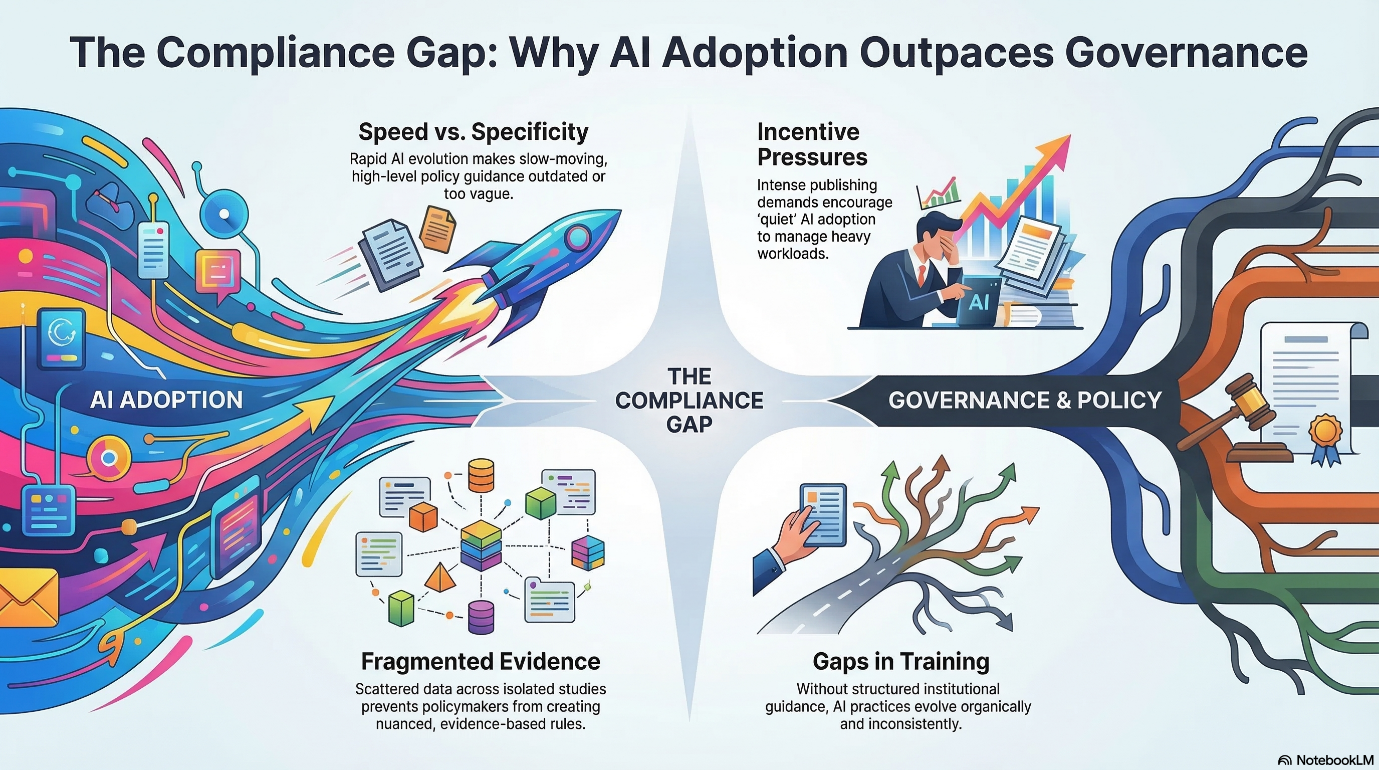

The shift from experimentation to routine use has progressed faster than policies meant to govern it. The result is a growing gap between “what researchers actually do” and “what institutions formally permit.”

For those focused on responsible use of AI in research, this gap is now the central issue. The question is no longer whether AI will be used, but how to align its widespread use with meaningful, enforceable standards.

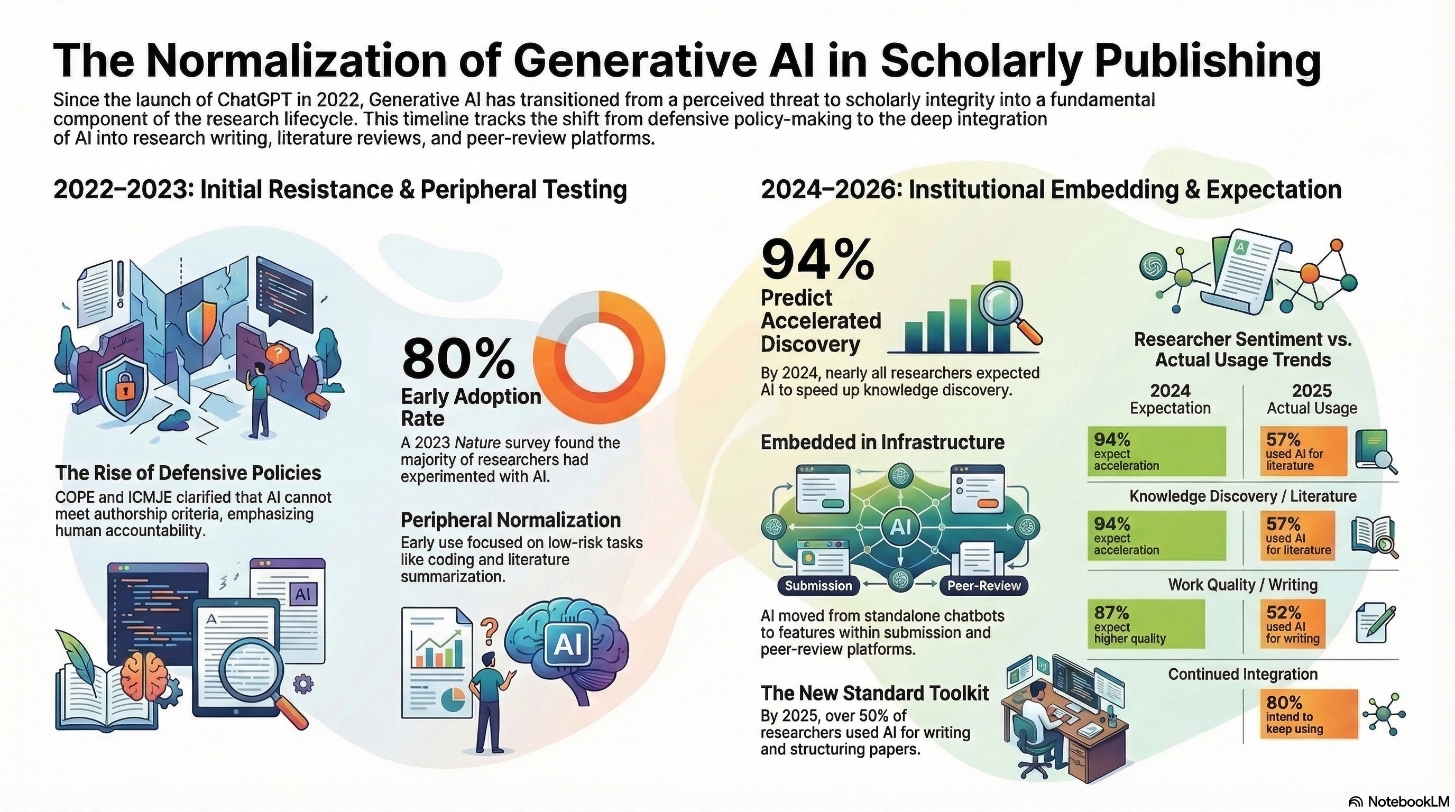

Early Reactions and Rapid Uptake (2022–2023)

Within weeks of the release of ChatGPT in November 2022, millions were experimenting with its ability to draft text, summarize papers, and generate ideas. Researchers followed suit, testing its utility across writing, coding, and literature review tasks.

Early reactions from academia and publishing centered on plagiarism, hallucinated references, and the possibility of outsourcing intellectual work, prompting publishers and ethics bodies to respond defensively. The Committee on Publication Ethics (COPE) issued a 2023 position statement clarifying that AI tools cannot meet the criteria for authorship and that human authors remain fully responsible for any AI-assisted content. The International Committee of Medical Journal Editors (ICMJE) likewise updated its Recommendations, requiring authors to disclose AI-based writing assistance in acknowledgements and reiterating that only humans should be named as authors.

Some journals went further, discouraging AI-generated text altogether. The Science family of journals, for example, clarified that its licence requires submitted work to be original and not written by tools such as ChatGPT. Others, including Elsevier and Springer Nature, permitted limited use of generative AI for language editing while requiring authors to disclose any substantive AI assistance and forbidding AI systems from being listed as authors. These updates positioned generative AI primarily as a threat to scholarly integrity, rather than as a tool that researchers might integrate into legitimate workflows.

At the same time, away from formal policy conversations, researchers were already beginning to fold AI into their everyday routines in quieter, more experimental ways.

Peripheral Normalization in Research Practice (2023–2024)

A 2023 online survey of 672 Nature readers found that around 80% had used ChatGPT or similar tools at least once; about 22% were using such tools frequently for tasks including summarizing literature and writing or debugging code. In other words, normalization of AI did not begin at the core of scientific reasoning but at its periphery, where efficiency gains were immediate and least controversial.

A separate global survey of nearly 3,000 researchers, clinicians, and academic leaders, conducted between December 2023 and February 2024 and published in Elsevier’s “Insights 2024: Attitudes toward AI” report, found that:

- 94% of researchers believed AI would accelerate knowledge discovery.

- 87% expected AI to increase work quality overall.

- 85% anticipated it would free time for higher-value projects.

These numbers do not suggest universal, deep integration, but they clearly indicate a critical transition, with generative AI on a fast upward trajectory from experiment to expectation.

When AI Became Embedded in Infrastructure (2024–2026)

By 2024, generative AI was no longer just a standalone chatbot; it had begun to appear within the platforms researchers relied on. Springer Nature’s training team was already reporting that questions about generative AI had become a standard feature of their researcher workshops, rather than an occasional curiosity. In a poll of more than 3,800 postdoctoral researchers cited in the same piece, 31% were using generative AI in their work. The team also highlighted that training in responsible use lagged far behind this interest. Without clear institutional direction, researchers were left to develop their own practices.

By 2025, the picture had shifted from isolated experiments and training questions to wide‑scale, embedded use. Building on the 2024 workshop evidence, a 2025 survey conducted by Springer Nature (n = 2,021) found that 57% of researchers had used an AI tool to keep up with literature and 52% had used AI to help write and structure research papers; of those who had used AI tools, 80% intended to keep using them. Read alongside Springer Nature’s own account of embedding AI into its peer‑review platform and other workflow tools, this suggests a second phase of normalization: AI is no longer just being tested at the edges, but is becoming part of the infrastructure that underpins how manuscripts are written, submitted, and evaluated.

Normalization has been more pronounced among early-career researchers. For many graduate and postdoctoral researchers, tools like ChatGPT or AI-powered literature assistants are already part of the standard toolkit, used alongside reference managers.

This reflects a deeper shift: AI has become a staple of modern scientific research.

A Cultural Shift: From Taboo to Tool

Beneath policies and survey data lies a quieter, but critical cultural transition. In 2023, generative AI was often framed as a kind of academic shortcut, something to be monitored, restricted, or even avoided. Its use carried a degree of stigma.

By 2024–2025, however, that framing had softened. Publishers and platforms began describing AI as a way to expand human capabilities across research and publishing workflows, with human decision-making and integrity at its core.

In practice, AI support is widely considered legitimate for brainstorming, outlining, summarizing long texts and language polishing, while hypothesis formation, study design, data interpretation, and final argumentative framing are still viewed as non-delegable human responsibilities.

Policy: Consensus Without Operational Clarity

One might expect policy to evolve in parallel. In reality, it has been uneven. There is robust consensus among major organizations on three points: AI systems cannot be listed as authors, human oversight is required for any AI-assisted content, and AI use must be disclosed in line with journal requirements. Publisher policies echo these norms but operationalize them differently.

Many guidelines remain abstract, offering general directives without addressing real-world scenarios. What counts as acceptable assistance? Where is the boundary between editing and generation? How should disclosure be handled in practice?

At an institutional level, guidance emphasizes “responsibility” and “transparency” without really spelling out concrete use cases, practical boundaries, or scenario-based examples. The result is a familiar pattern: researchers adopt tools faster than institutions can define how they should be used.

Why Practice Is Outpacing Policy

Four factors help explain why AI adoption has moved faster than governance.

What You Need to Do for Responsible Use

If AI is already normalized in research practice, the challenge now is to normalize responsible use just as quickly.

For publishers, this involves:

- Continuing to harmonize AI policies around authorship, disclosure, and confidentiality, while communicating them clearly to authors.

- Embedding AI-use declarations directly into submission, peer-review, and publication workflows, so that disclosure becomes a routine element of scholarly practice.

For universities, research organizations, and funders, this involves:

- Developing clear, discipline-aware acceptable-use frameworks that map where AI can and must not be used across tasks like hypothesis generation, data collection, analysis, and writing.

- Integrating AI literacy into graduate curricula and research integrity training, using realistic case studies.

- Mandating human review and sign‑off for high‑stakes tasks, such as final data interpretation, conclusions, and recommendations, and clearly identifying which stages of the research lifecycle can never be delegated to AI alone.

For individual researchers, the next phase of normalization means aligning daily practice with responsible-use expectations. This includes treating generative AI as a powerful but fallible assistant, one that must always be paired with human critical judgment, careful attention to privacy and security, and clear documentation of where and how AI tools were used across the research and writing process.

The real test for the research community now is whether governance, culture, and training can evolve quickly enough to ensure that this new routine strengthens, rather than erodes, the trust that supports scholarly communication.

Frequently Asked Questions

How are researchers using AI tools like ChatGPT in academia?

Researchers commonly use AI tools for:

- Summarizing research papers and literature reviews

- Drafting and editing academic manuscripts

- Generating and debugging code

- Brainstorming research ideas and outlines

However, critical tasks such as hypothesis development, study design, and data interpretation still require human expertise and cannot be delegated to AI.

How is AI changing the future of academic publishing?

AI is transforming academic publishing by:

- Streamlining manuscript preparation and peer review

- Enhancing literature discovery and analysis

- Automating repetitive editorial tasks

- Improving research accessibility and dissemination

It is increasingly becoming part of the core infrastructure of scholarly publishing.

Is it ethical to use AI in academic research?

Yes, but only when used responsibly. Ethical AI use in research requires:

- Transparency in disclosing AI assistance

- Human oversight and accountability

- Avoidance of plagiarism and fabricated content

- Protection of confidential and sensitive data

Organizations like COPE and ICMJE emphasize that researchers remain fully responsible for any AI-assisted work.

Can AI replace researchers in the future?

No, AI cannot replace researchers. While it can enhance productivity and assist with routine tasks, it lacks the critical thinking, scientific reasoning, ethical judgment, domain expertise, and contextual understanding that research requires.

AI is best positioned as a collaborative tool that augments human intelligence.