By: Enago

Retractions are at an all-time high. How can you avoid them?

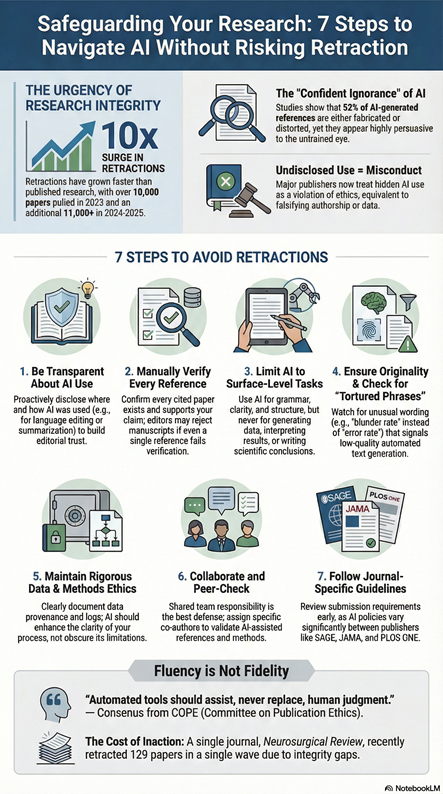

AI is increasingly embedded in research workflows—often faster than institutional safeguards can adapt. In 2025 alone, more than 200 papers were retracted or investigated due to undisclosed or inappropriate AI use, against the backdrop of a tenfold rise in retractions over the past two decades. In one notable case, Neurosurgical Review pulled 129 papers in a single wave. These cases reveal gaps in disclosure, verification, and peer-review safeguards, as manuscripts containing fabricated data, inaccurate citations, or residual system prompts progressed through editorial review without adequate detection.

For researchers who assume such failures are unlikely to affect their own work, the evidence suggests otherwise. Many of these cases followed a similar pattern: overreliance on automated text generation combined with insufficient human scrutiny, rather than any inherent flaw in the technology itself.

Why are Retractions at an All-Time High?

The rising number of retractions is not a coincidence; it reflects a broader trend in research integrity. Analyses of the Retraction Watch database show that more than 43,000 research articles have been retracted overall, with more than 10,000 retractions in 2023 alone, setting a new annual record. Building on this historical trend, an Enago analysis indicates that a further 11,030 articles were retracted in 2024-2025, underscoring continued growth in retraction activity.

Importantly, this rise predates the widespread use of generative AI. As reported by The Scholarly Kitchen, retractions have increased faster than the overall volume of published research, leading to a higher retraction rate, not merely more retractions in absolute terms. By 2022, some fields exceeded 11 retractions per 10,000 published papers, compared with approximately 3.5 per 10,000 a decade earlier.
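To put those two rates in proportion, the change can be worked out directly. The sketch below uses only the figures quoted above (3.5 and 11 per 10,000 papers); the function and constant names are illustrative, and because some fields exceeded 11 per 10,000, the result is best read as a rough lower bound for those fields.

```python
def fold_change(earlier_rate: float, later_rate: float) -> float:
    """Return how many times larger the later rate is than the earlier one."""
    if earlier_rate <= 0:
        raise ValueError("earlier_rate must be positive")
    return later_rate / earlier_rate


# Retractions per 10,000 published papers, as quoted in the text above.
RATE_DECADE_EARLIER = 3.5
RATE_2022 = 11.0

ratio = fold_change(RATE_DECADE_EARLIER, RATE_2022)
print(f"Retraction rate grew roughly {ratio:.1f}x")           # 3.1x
print(f"Share of papers retracted: {RATE_2022 / 10_000:.2%}")  # 0.11%
```

In other words, the rate roughly tripled, even though retracted papers remain a small fraction of all published work.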

While recent headlines often focus on AI, most retractions remain unrelated to it and instead reflect longstanding challenges such as data integrity, authorship practices, and editorial oversight. AI has not created the retraction problem—but it is reshaping how quickly and invisibly existing weaknesses can scale.

How AI Amplifies Existing Integrity Risks

While many retractions still stem from traditional forms of misconduct and error, AI has rapidly become a major amplifier of the problem. Large language models can produce fluent, persuasive academic text, but fluency is not fidelity. Documented investigations and empirical studies show AI-generated manuscripts can:

- Include hallucinated references: A recent Retraction Watch analysis reported citations to non-existent papers or to sources that did not substantiate the stated claims.

- Introduce data or image integrity risks: These arise when figures or analyses are generated or modified without proper verification. Studies have shown that a significant number of published papers contain manipulated or inappropriate images, highlighting systemic verification challenges that can be amplified by AI use without oversight.

- Mask methodological weaknesses: AI-assisted language polishing can increase readability without improving study design, potentially making exploratory findings appear more robust than warranted (Scientific Reports, 2025).

These issues arise not from the tools themselves, but from gaps in oversight and verification. Without careful human review, plausible-sounding AI outputs can slip into the scholarly record undetected.

What Happens When AI Use Goes Undisclosed?

Even unintentional AI use, when hidden, is treated as research misconduct. Publishers like IOP, Springer Nature, and Wiley now treat undisclosed AI as a violation of publication ethics, equivalent to falsifying authorship. Retractions don’t just erase papers; they damage reputations, funding eligibility, and institutional credibility.

How Common are AI‑Fabricated References, Really?

Disturbingly common. A 2024 study found that 52% of AI‑generated references were either fabricated or distorted. Fluent language masks false information, creating the illusion of accuracy. Editors like Melissa Kacena from the Indiana University School of Medicine have adapted—she rejects any manuscript where more than one reference fails verification. Could your paper survive that test?
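One way to apply that test to your own manuscript before submission is a simple threshold check. The sketch below is illustrative only: the `Reference` record, its `verified` flag, and the function name are hypothetical, the citations are placeholders, and the code assumes a person has already located and read each source. It does not verify anything itself.

```python
from dataclasses import dataclass


@dataclass
class Reference:
    citation: str
    verified: bool  # set by a human who located and read the source


def passes_reference_screen(references: list[Reference], max_failures: int = 1) -> bool:
    """Return True if the count of unverified references is at most max_failures."""
    failures = sum(1 for ref in references if not ref.verified)
    return failures <= max_failures


# Placeholder entries, not real citations.
manuscript = [
    Reference("Author A (2021), Journal X", verified=True),
    Reference("Author B (2023), Journal Y", verified=False),
    Reference("Author C (2020), Journal Z", verified=False),
]

print(passes_reference_screen(manuscript))  # False: two references failed
```

The default of `max_failures=1` mirrors the "more than one reference fails" rule described above; a stricter author might set it to 0.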

What’s the Real Danger: Bad Writing or Bad Science?

Both. AI doesn’t “understand” science; it predicts text patterns. Even in expert‑reviewed topics such as Chiari malformation or gliomas, AI‑edited papers have been retracted after reviewers found hallucinated citations and distorted terminology. Fluent but flawed AI writing deceives editors, reviewers, and even authors—until it’s too late.

Isn’t AI Meant To Help Researchers?

Yes, but only under strict human control. When researchers let ChatGPT or other LLMs handle analysis, phrasing, or literature synthesis without oversight, AI can subtly alter meaning, omit key evidence, or introduce fake citations. Something that starts as “just editing” can grow into corrections or retraction notices, which can hurt the integrity of the publication and the reputation of the authors.

Could Detection Tools Protect Me From These Mistakes?

Not entirely. AI‑detection software can flag unusual phrasing or repetitive syntax but cannot verify truth or source reliability. A human reviewer remains your only safeguard against AI’s most dangerous flaw: its confident ignorance. Even STM and COPE agree that automated tools should assist, never replace, human judgment.

How are Publishers and Universities Responding?

Global action is accelerating:

- Elsevier, Nature, JAMA, and PLOS ONE now demand explicit AI disclosure.

- Wiley requires authors to declare how AI influenced arguments or conclusions.

- Sage mandates human verification of every AI‑generated sentence and citation.

- Universities worldwide are embedding AI ethics training into their research curricula.

If your institution isn’t already doing this, it soon will, because the cost of inaction is now measurable in retractions. To stay informed about evolving publisher policies and best practices, explore Enago’s Responsible AI Movement Page.

Safeguarding Your Research: 7 Steps to Navigate AI Without Risking Retraction

The path forward is not to abandon AI but to govern it wisely. Responsible AI use requires clear boundaries, verification workflows, and shared accountability across researchers, editors, and institutions.

The following section outlines 7 practical, implementable steps:

Disclosure: The above image was generated using NotebookLM for illustrative purposes.

Researchers who embed these practices early will not only reduce risk, but help define emerging standards of responsible AI use.

Is This Just Another Passing Ethics Trend?

No. These cases mark a permanent shift in how academic integrity is defined. As AI becomes integral to research, transparency and human oversight will increasingly determine credibility. The invitation is clear: join the growing community of researchers shaping the future of academic integrity by using AI openly, responsibly, and under human judgment. This shared commitment to responsible AI use is not about limiting technology; it is about protecting science from subtle, invisible forms of distortion as research enters a new era of automation.

Ready to safeguard your research?

Start by ensuring your AI use is transparent and compliant. Generate a journal-ready AI disclosure statement with our AI Disclosure Generator and stay aligned with evolving publisher policies before submission, not after retraction.

References

https://www.aapsnewsmagazine.org/aapsnewsmagazine/articles/new-page3/feb25/meetings-feb25

https://www.nature.com/articles/d41586-023-03974-8

https://pmc.ncbi.nlm.nih.gov/articles/PMC4702694/

https://www.nature.com/articles/s41598-025-31337-y

https://link.springer.com/article/10.1007/s44217-024-00333-1
